Table Of Contents

- What is SEO?

- ‘User Experience’ Matters

- Balancing Conversions With Usability & User Satisfaction

- Google Wants To Rank High-Quality Websites

- Google Is Not Going To Rank Low-Quality Pages When It Has Better Options

- Technical SEO

- What Is Domain Authority?

- Is Domain Age An Important Google Ranking Factor?

- Google Penalties For Unnatural Footprints

- Ranking Factors

- Keyword Research

- Do Keywords In Bold Or Italic Help?

- How Many Words & Keywords Do I Use On A Page?

- What is The Perfect Keyword Density?

- ‘Things, Not Strings’

- Can I Just Write Naturally and Rank High in Google?

- Do You Need Lots of Text To Rank Pages In Google?

- Optimise For User Intent & Satisfaction

- Optimising For ‘The Long Click’

- Page Title Element

- Meta Keywords Tag

- Meta Description Tag

- Robots Meta Tag

- Robots.txt File

- H1-H6: Page Headings

- Alt Tags

- Link Title Attributes, Acronym & ABBR Tags

- Search Engine Friendly URLs (SEF)

- Absolute Or Relative URLs

- Subdirectories or Files For URL Structure

- Which Is Better For Google? PHP, HTML or ASP?

- Does W3C Valid HTML / CSS Help Rank?

- Point Internal Links To Relevant Pages

- Link Out To Related Sites

- Broken Links Are A Waste Of Link Power

- Does Only The First Link Count In Google?

- Duplicate Content Penalty

- Double or Indented Listings in Google

- Redirect Non-WWW To WWW

- 301 Redirects Are POWERFUL & WHITE HAT

- Canonical Link Element Is Your Best Friend

- Do I Need A Google XML Sitemap For My Website?

- Rich Snippets

- Dynamic PHP Copyright Notice in WordPress

- Adding Schema.org Mark-up to Your Footer

- Keep It Simple, Stupid

- How Fast Should Your Website Download?

- A Non-Technical Google SEO Strategy

- What Not To Do In Website Search Engine Optimisation

- Don’t Flag Your Site With Poor Website Optimisation

- The Continual Evolution of SEO

- Beware Pseudoscience

- How Long Does It Take To See Results?

- What Makes A Page Spam?

- If A Page Exists Only To Make Money, The Page Is Spam, to Google

- Doorway Pages

- A Real Google Friendly Website

- Google Webmaster Guidelines

- Free SEO EBOOK (2016) PDF

What is SEO?

Search Engine Optimisation in 2017 is a technical, analytical and creative process to improve the visibility of a website in search engines. Its primary function is to drive more visits to a site that convert into sales.

The free SEO tips you will read on this page will help you create a successful SEO friendly website yourself.

I have over 15 years’ experience making websites rank in Google. If you need optimisation services – see my SEO audit or small business SEO services.

An Introduction

This article is a beginner’s guide to effective white hat SEO.

I deliberately steer clear of techniques that might be ‘grey hat’, as what is grey today is often ‘black hat’ tomorrow, as far as Google is concerned.

No one-page guide can explore this complex topic in full. What you’ll read here are answers to questions I had when I was starting out in this field.

The ‘Rules.’

Google insists webmasters adhere to their ‘rules’ and aims to reward sites with high-quality content and remarkable ‘white hat’ web marketing techniques with high rankings.

Conversely, it also needs to penalise websites that manage to rank in Google by breaking these rules.

These rules are not ‘laws’, but ‘guidelines’, for ranking in Google, laid down by Google. You should note, however, that some methods of ranking in Google are, in fact, illegal. Hacking, for instance, is illegal in the UK and US.

You can choose to follow and abide by these rules, bend them or ignore them – all with different levels of success (and levels of retribution, from Google’s web spam team).

White hats do it by the ‘rules’; black hats ignore the ‘rules’.

What you read in this article is perfectly within the laws and also within the guidelines and will help you increase the traffic to your website through organic, or natural search engine results pages (SERPs).

Definition

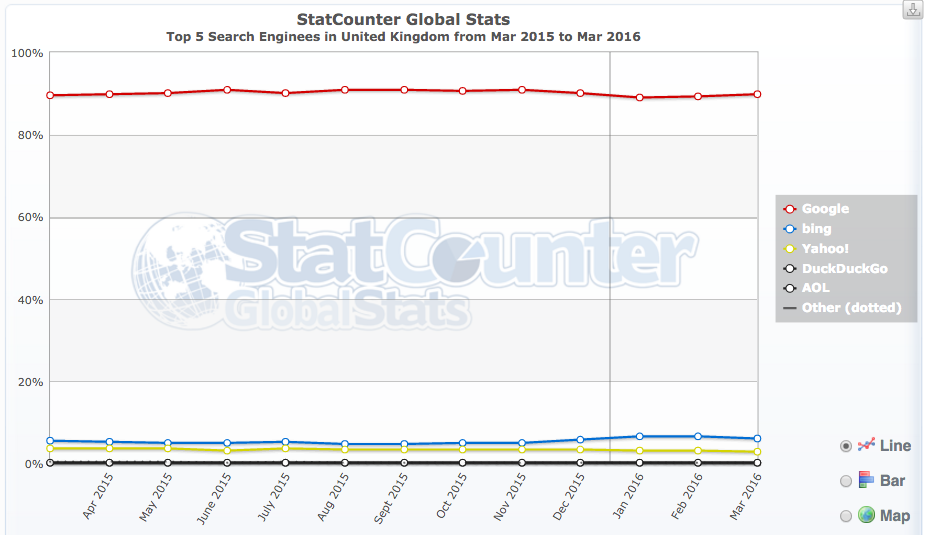

There are a lot of definitions of SEO (spelled search engine optimisation in the UK, Australia and New Zealand, or search engine optimization in the United States and Canada) but organic SEO in 2017 is mostly about getting free traffic from Google, the most popular search engine in the world (and almost the only game in town in the UK).

Opportunity

The art of web SEO lies in understanding how people search for things and understanding what type of results Google wants to (or will) display to its users. It’s about putting a lot of things together to look for opportunity.

A good optimiser has an understanding of how search engines like Google generate their natural SERPs to satisfy users’ navigational, informational and transactional keyword queries.

Risk Management

A good search engine marketer has a good understanding of the short term and long term risks involved in optimising rankings in search engines, and an understanding of the type of content and sites Google (especially) WANTS to return in its natural SERPs.

The aim of any campaign is more visibility in search engines and this would be a simple process if it were not for the many pitfalls.

There are rules to be followed or ignored, risks to take, gains to make, and battles to be won or lost.

Free Traffic

A Mountain View spokesman once called the search engine ‘kingmakers‘, and that’s no lie.

Ranking high in Google is VERY VALUABLE – it’s effectively ‘free advertising’ on the best advertising space in the world.

Traffic from Google natural listings is STILL the most valuable organic traffic to a website in the world, and it can make or break an online business.

The state of play, in 2017, is that you can STILL generate highly targeted leads, for FREE, just by improving your website and optimising your content to be as relevant as possible for a buyer looking for your company, product or service.

As you can imagine, there’s a LOT of competition now for that free traffic – even from Google (!) in some niches.

You shouldn’t compete with Google. You should focus on competing with your competitors.

The Process

The process can be practised, successfully, in a bedroom or a workplace, but it has traditionally involved mastering many skills as they arose, across diverse marketing technologies, including but not limited to:

- Website design

- Accessibility

- Usability

- User experience

- Website development

- PHP, HTML, CSS, etc.

- Server management

- Domain management

- Copywriting

- Spreadsheets

- Backlink analysis

- Keyword research

- Social media promotion

- Software development

- Analytics and data analysis

- Information architecture

- Research

- Log Analysis

- Looking at Google for hours on end

It takes a lot, in 2017, to rank a page on merit in Google in competitive niches.

User Experience

The big stick Google is hitting every webmaster with (at the moment, and for the foreseeable future) is the ‘QUALITY USER EXPERIENCE‘ stick.

If you expect to rank in Google in 2017, you’d better have a quality offering, not based entirely on manipulation, or old school tactics.

Is a visit to your site a good user experience?

If not – beware manual ‘Quality Raters’ and beware the Google Panda/Site Quality algorithms that are looking for signs of a poor user experience.

Google raising the ‘quality bar’, year on year, ensures a higher level of quality in online marketing in general (above the very low quality we’ve seen over the last few years).

Success online involves investment in higher quality on-page content, website architecture, usability, conversion to optimisation balance, and promotion.

If you don’t take that route, you’ll find yourself chased down by Google’s algorithms at some point in the coming year.

This ‘what is SEO‘ guide (and this entire website) is not about the churn and burn type of Google SEO (called webspam by Google) as that is too risky to deploy on a real business website in 2017.

What Is A Successful Strategy?

Get relevant. Get trusted. Get Popular.

It is no longer just about manipulation in 2017.

It’s about adding quality and often useful content to your website that together meet a PURPOSE that delivers USER SATISFACTION.

If you are serious about getting more free traffic from search engines, get ready to invest time and effort in your website and online marketing.

Quality Signals

Google wants to rank QUALITY documents in its results and force those who wish to rank high to invest in higher-quality content or great service that attracts editorial links from reputable websites.

If you’re willing to add a lot of great content to your website and create buzz about your company, Google will rank you high.

If you try to manipulate Google, it will penalise you for a period, and often until you fix the offending issue – which we know can LAST YEARS.

Backlinks in general, for instance, are STILL weighed FAR too positively by Google and they are manipulated to drive a site to the top positions – for a while. That’s why blackhats do it – and they have the business model to do it. It’s the easiest way to rank a site, still today.

If you are a real business who intends to build a brand online – you can’t use black hat methods. Full stop.

Fixing the problems will not necessarily bring organic traffic back as it was before a penalty.

Recovery from a Google penalty is a ‘new growth’ process as much as it is a ‘clean-up’ process.

Google Rankings Are In Constant Ever-Flux

It’s Google’s job to MAKE MANIPULATING SERPs HARD.

So – the people behind the algorithms keep ‘moving the goalposts’, modifying the ‘rules’ and raising ‘quality standards’ for pages that compete for top ten rankings.

In 2017 – we have ever-flux in the SERPs – and that seems to suit Google and keep everybody guessing.

Google is very secretive about its ‘secret sauce’ and offers sometimes helpful and sometimes vague advice – and some say offers misdirection – about how to get more valuable traffic from Google.

Google is on record as saying the engine is intent on ‘frustrating’ search engine optimisers’ attempts to improve the amount of high-quality traffic to a website – at least (but not limited to) – using low-quality strategies classed as web spam.

At its core, Google search engine optimisation is still about KEYWORDS and LINKS. It’s about RELEVANCE, REPUTATION and TRUST. It is about QUALITY OF CONTENT & VISITOR SATISFACTION.

A Good USER EXPERIENCE is a key to winning – and keeping – the highest rankings in many verticals.

Relevance, Authority & Trust

Web page optimisation is about making a web page relevant and trusted enough to rank for a query.

It’s about ranking for valuable keywords for the long term, on merit. You can play by ‘white hat’ rules laid down by Google, and aim to build this Authority and Trust naturally, over time, or you can choose to ignore the rules and go full time ‘black hat’.

MOST SEO tactics still work, for some time, on some level, depending on who’s doing them, and how the campaign is deployed.

Whichever route you take, know that if Google catches you trying to modify your rank using overtly obvious and manipulative methods, then they will class you as a web spammer, and your site will be penalised (you will not rank high for relevant keywords).

These penalties can last years if not addressed, as some penalties expire and some do not – and Google wants you to clean up any violations.

Google does not want you to try and modify where you rank, easily. Critics would say Google would prefer you paid them to do that using Google Adwords.

The problem for Google is – ranking high in Google organic listings is a real social proof for a business, a way to avoid PPC costs and still, simply, the BEST WAY to drive VALUABLE traffic to a site.

It’s FREE, too, once you’ve met the always-increasing criteria it takes to rank top.

‘User Experience’ Matters

Is User Experience A Ranking Factor?

User experience is mentioned 16 times in the main content of the quality raters guidelines (official PDF), but we have been told by Google it is not, per se, a classifiable ‘ranking factor‘ on desktop search, at least.

On mobile, sure, since UX is the base of the mobile friendly update. On desktop currently no. (Gary Illyes: Google, May 2015)

While UX, we are told, is not literally a ‘ranking factor’, it is useful to understand exactly what Google calls a ‘poor user experience’ because if any poor UX signals are identified on your website, that is not going to be a healthy thing for your rankings anytime soon.

Matt Cutts’s consistent SEO advice was to focus on a satisfying user experience.

What is Bad UX?

For Google – rating UX, at least from a quality rater’s perspective, revolves around marking the page down for:

- Misleading or potentially deceptive design

- sneaky redirects (cloaked affiliate links)

- malicious downloads and

- spammy user-generated content (unmoderated comments and posts)

- Low-quality MC (main content of the page)

- Low-quality SC (supplementary content)

What is SC (supplementary content)?

When it comes to a web page and positive UX, Google talks a lot about the functionality and utility of Helpful Supplementary Content – e.g. helpful navigation links for users (that are not, generally, MC or Ads).

Supplementary Content contributes to a good user experience on the page, but does not directly help the page achieve its purpose. SC is created by Webmasters and is an important part of the user experience. One common type of SC is navigation links which allow users to visit other parts of the website. Note that in some cases, content behind tabs may be considered part of the SC of the page.

To summarize, a lack of helpful SC may be a reason for a Low quality rating, depending on the purpose of the page and the type of website. We have different standards for small websites which exist to serve their communities versus large websites with a large volume of webpages and content. For some types of “webpages,” such as PDFs and JPEG files, we expect no SC at all.

It is worth remembering that Good SC cannot save Poor MC (“Main Content is any part of the page that directly helps the page achieve its purpose“.) from a low-quality rating.

Good SC seems to certainly be a sensible option. It always has been.

Key Points about SC

- Supplementary Content can be a large part of what makes a High-quality page very satisfying for its purpose.

- Helpful SC is content that is specifically targeted to the content and purpose of the page.

- Smaller websites such as websites for local businesses and community organizations, or personal websites and blogs, may need less SC for their purpose.

- A page can still receive a High or even Highest rating with no SC at all.

Here are the specific quotes containing the term SC:

- Supplementary Content contributes to a good user experience on the page, but does not directly help the page achieve its purpose.

- SC is created by Webmasters and is an important part of the user experience. One common type of SC is navigation links which allow users to visit other parts of the website. Note that in some cases, content behind tabs may be considered part of the SC of the page.

- SC which contributes to a satisfying user experience on the page and website. – (A mark of a high-quality site – this statement was repeated 5 times)

- However, we do expect websites of large companies and organizations to put a great deal of effort into creating a good user experience on their website, including having helpful SC. For large websites, SC may be one of the primary ways that users explore the website and find MC, and a lack of helpful SC on large websites with a lot of content may be a reason for a Low rating.

- However, some pages are deliberately designed to shift the user’s attention from the MC to the Ads, monetized links, or SC. In these cases, the MC becomes difficult to read or use, resulting in a poor user experience. These pages should be rated Low.

- Misleading or potentially deceptive design makes it hard to tell that there’s no answer, making this page a poor user experience.

- Redirecting is the act of sending a user to a different URL than the one initially requested. There are many good reasons to redirect from one URL to another, for example, when a website moves to a new address. However, some redirects are designed to deceive search engines and users. These are a very poor user experience, and users may feel tricked or confused. We will call these “sneaky redirects.” Sneaky redirects are deceptive and should be rated Lowest.

- However, you may encounter pages with a large amount of spammed forum discussions or spammed user comments. We’ll consider a comment or forum discussion to be “spammed” if someone posts unrelated comments which are not intended to help other users, but rather to advertise a product or create a link to a website. Frequently these comments are posted by a “bot” rather than a real person. Spammed comments are easy to recognize. They may include Ads, download, or other links, or sometimes just short strings of text unrelated to the topic, such as “Good,” “Hello,” “I’m new here,” “How are you today,” etc. Webmasters should find and remove this content because it is a bad user experience.

- The modifications make it very difficult to read and are a poor user experience. (Lowest quality MC (copied content with little or no time, effort, expertise, manual curation, or added value for users))

- Sometimes, the MC of a landing page is helpful for the query, but the page happens to display porn ads or porn links outside the MC, which can be very distracting and potentially provide a poor user experience.

- The query and the helpfulness of the MC have to be balanced with the user experience of the page.

- Pages that provide a poor user experience, such as pages that try to download malicious software, should also receive low ratings, even if they have some images appropriate for the query.

In short, nobody is going to advise you to create a poor UX, on purpose, in light of Google’s algorithms and human quality raters who are showing an obvious interest in this stuff. Google is rating mobile sites on what it classes as frustrating UX – although on certain levels what Google classes as ‘UX’ might be quite far apart from what a UX professional is familiar with, in the same way that Google’s mobile rating tools differ from, for instance, W3C mobile testing tools.

Google is still, evidently, more interested in rating the main content of the webpage in question and the reputation of the domain the page is on – relative to your site, and competing pages on other domains.

A satisfying UX can help your rankings, with second-order factors taken into consideration. A poor UX can seriously impact your human-reviewed rating, at least. Google’s punishing algorithms probably class pages as something akin to a poor UX if they meet certain detectable criteria e.g. lack of reputation or old-school SEO stuff like keyword stuffing a site.

If you are improving user experience by focusing primarily on the quality of the MC of your pages and avoiding – even removing – old-school SEO techniques, those are certainly positive steps towards getting more traffic from Google in 2017. The type of content performance Google rewards is, in the end, largely about a satisfying user experience.

Balancing Conversions With Usability & User Satisfaction

Take pop-up windows or pop-unders as an example:

According to usability expert Jakob Nielsen, 95% of website visitors hated unexpected or unwanted pop-up windows, especially those that contain unsolicited advertising.

In fact, Pop-Ups have been consistently voted the Number 1 Most Hated Advertising Technique since they first appeared many years ago.

Accessibility students will also agree:

- creating a new browser window should be the authority of the user

- pop-up new windows should not clutter the user’s screen.

- all links should open in the same window by default. (An exception, however, may be made for pages containing a links list. It is convenient in such cases to open links in another window so that the user can come back to the links page easily. Even in such cases, it is advisable to give the user a prior note that links would open in a new window).

- Tell visitors they are about to invoke a pop-up window (using the link title attribute)

- Popup windows do not work in all browsers.

- They are disorienting for users

- Provide the user with an alternative.

It is inconvenient for usability aficionados to hear that pop-ups can be used successfully to vastly increase signup subscription conversions.

EXAMPLE: TEST With Using A Pop Up Window

Pop ups suck, everybody seems to agree. Here’s the little test I carried out on a subset of pages, an experiment to see if pop-ups work on this site to convert more visitors to subscribers.

I tested it out when I didn’t blog for a few months and traffic was very stable.

Results:

Testing Pop Up Windows Results

| Day | Wk1 (Pop Up On) | Wk2 (Pop Up Off) | Total % Change |
| --- | --- | --- | --- |
| Mon | 46 | 20 | 173% |
| Tue | 48 | 23 | 109% |
| Wed | 41 | 15 | 173% |
| Thu | 48 | 23 | 109% |
| Fri | 52 | 17 | 206% |

That’s a fair increase in email subscribers across the board in this small experiment on this site. Using a pop up does seem to have an immediate impact.
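For anyone who wants to repeat this kind of test on their own numbers, here is a minimal sketch of the arithmetic, assuming the ‘% Change’ column is the uplift of the pop-up week over the no-pop-up week. The function and variable names are my own for illustration. Recomputing from the counts in the table gives 130% for Monday rather than the 173% shown, so treat that row with some caution; the other four days match.

```python
def percent_change(with_popup: int, without_popup: int) -> float:
    """Uplift of the pop-up week over the no-pop-up week, in percent."""
    if without_popup == 0:
        raise ValueError("baseline sign-ups must be non-zero")
    return (with_popup - without_popup) / without_popup * 100


# Daily sign-ups copied from the table above: (pop-up on, pop-up off).
signups = {
    "Mon": (46, 20),
    "Tue": (48, 23),
    "Wed": (41, 15),
    "Thu": (48, 23),
    "Fri": (52, 17),
}

# Per-day uplift, rounded to whole percentages like the table.
changes = {day: round(percent_change(on, off)) for day, (on, off) in signups.items()}

# Uplift across the whole working week.
total_on = sum(on for on, _ in signups.values())
total_off = sum(off for _, off in signups.values())
weekly_change = round(percent_change(total_on, total_off))

for day, change in changes.items():
    print(f"{day}: {change}%")
print(f"Week: {total_on} vs {total_off} sign-ups ({weekly_change}%)")
```

Five days is a very small sample, so a sketch like this is only a sanity check on the sums, not a test of statistical significance.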

I have since tested it on and off for a few months and the results from the small test above have been repeated over and over.

I’ve tested different layouts and different calls to action without pop-ups, and they work too, to some degree, but they typically take a bit longer to deploy than activating a plugin.

I don’t really like pop-ups as they have been an impediment to web accessibility but it’s stupid to dismiss out-of-hand any technique that works. I’ve also not found a client who, if they had that kind of result, would choose accessibility over sign-ups.

I don’t really use the pop up on days I post on the blog, as in other tests, it really seemed to kill how many people share a post in social media circles.

With Google now showing an interest in interstitials, I would be very nervous of employing a pop-up window that obscures the primary reason for visiting the page. If Google detects dissatisfaction, I think this would be very bad news for your rankings.

I am, at the moment, using an exit strategy pop-up window as hopefully by the time a user sees this device, they are FIRST satisfied with the content they came to read. I can recommend this as a way to increase your subscribers, at the moment, with a similar conversion rate to pop-ups – if NOT BETTER.

I think, as an optimiser, it is sensible to convert customers without using techniques that potentially negatively impact Google rankings.

Do NOT let conversion get in the way of the PRIMARY reason a visitor is CURRENTLY on ANY PARTICULAR PAGE or you risk Google detecting relative dissatisfaction with your site, and that is not going to help you.

Google Wants To Rank High-Quality Websites

Google has a history of classifying your site as some type of entity, and whatever that is, you don’t want a low-quality label on it. Put there by algorithm or human. Manual evaluators might not directly impact your rankings, but any signal associated with Google marking your site as low-quality should probably be avoided.

If you are making websites to rank in Google without unnatural practices, you are going to have to meet Google’s expectations in the Quality Raters Guidelines (PDF).

Google says:

Low-quality pages are unsatisfying or lacking in some element that prevents them from achieving their purpose well.

‘Sufficient Reason’

There is ‘sufficient reason’ in some cases to immediately mark the page down on some areas, and Google directs quality raters to do so:

- An unsatisfying amount of MC is a sufficient reason to give a page a Low-quality rating.

- Low-quality MC is a sufficient reason to give a page a Low-quality rating.

- Lacking appropriate E-A-T is sufficient reason to give a page a Low-quality rating.

- Negative reputation is sufficient reason to give a page a Low-quality rating.

What are low-quality pages?

When it comes to defining what a low-quality page is, Google is evidently VERY interested in the quality of the Main Content (MC) of a page:

Main Content (MC)

Google says MC should be the ‘main reason a page exists’.

- The quality of the MC is low.

- There is an unsatisfying amount of MC for the purpose of the page.

- There is an unsatisfying amount of website information.

POOR MC & POOR USER EXPERIENCE

- This content has many problems: poor spelling and grammar, complete lack of editing, inaccurate information. The poor quality of the MC is a reason for the Lowest+ to Low rating. In addition, the popover ads (the words that are double underlined in blue) can make the main content difficult to read, resulting in a poor user experience.

- Pages that provide a poor user experience, such as pages that try to download malicious software, should also receive low ratings, even if they have some images appropriate for the query.

DESIGN FOCUS NOT ON MC

- If a page seems poorly designed, take a good look. Ask yourself if the page was deliberately designed to draw attention away from the MC. If so, the Low rating is appropriate.

- The page design is lacking. For example, the page layout or use of space distracts from the MC, making it difficult to use the MC.

MC LACK OF AUTHOR EXPERTISE

- You should consider who is responsible for the content of the website or content of the page you are evaluating. Does the person or organization have sufficient expertise for the topic? If expertise, authoritativeness, or trustworthiness is lacking, use the Low rating.

- There is no evidence that the author has medical expertise. Because this is a YMYL medical article, lacking expertise is a reason for a Low rating.

- The author of the page or website does not have enough expertise for the topic of the page and/or the website is not trustworthy or authoritative for the topic. In other words, the page/website is lacking E-A-T.

After page content, the following are given the most weight in determining if you have a high-quality page.

POOR SECONDARY CONTENT

- Unhelpful or distracting SC that benefits the website rather than helping the user is a reason for a Low rating.

- The SC is distracting or unhelpful for the purpose of the page.

- The page is lacking helpful SC.

- For large websites, SC may be one of the primary ways that users explore the website and find MC, and a lack of helpful SC on large websites with a lot of content may be a reason for a Low rating

DISTRACTING ADVERTISEMENTS

- For example, an ad for a model in a revealing bikini is probably acceptable on a site that sells bathing suits, however, an extremely distracting and graphic porn ad may warrant a Low rating.

GOOD HOUSEKEEPING

- If the website feels inadequately updated and inadequately maintained for its purpose, the Low rating is probably warranted.

- The website is lacking maintenance and updates.

SERP SENTIMENT & NEGATIVE REVIEWS

- Credible negative (though not malicious or financially fraudulent) reputation is a reason for a Low rating, especially for a YMYL page.

- The website has a negative reputation.

LOWEST RATING

When it comes to Google assigning your page the lowest rating, you are probably going to have to go some to hit this, but it gives you a direction you want to ensure you avoid at all costs.

Google says throughout the document, that there are certain pages that…

should always receive the Lowest rating

..and these are presented below. Note – These statements below are spread throughout the raters document and not listed the way I have listed them there. I don’t think any context is lost presenting them like this, and it makes it more digestible.

Anyone familiar with Google Webmaster Guidelines will be familiar with most of the following:

- True lack of purpose pages or websites.

- Sometimes it is difficult to determine the real purpose of a page.

- Pages on YMYL websites with completely inadequate or no website information.

- Pages or websites that are created to make money with little to no attempt to help users.

- Pages with extremely low or lowest quality MC.

- If a page is deliberately created with no MC, use the Lowest rating. Why would a page exist without MC? Pages with no MC are usually lack of purpose pages or deceptive pages.

- Webpages that are deliberately created with a bare minimum of MC, or with MC which is completely unhelpful for the purpose of the page, should be considered to have no MC

- Pages deliberately created with no MC should be rated Lowest.

- Important: The Lowest rating is appropriate if all or almost all of the MC on the page is copied with little or no time, effort, expertise, manual curation, or added value for users. Such pages should be rated Lowest, even if the page assigns credit for the content to another source.

- Pages on YMYL (Your Money Or Your Life Transaction pages) websites with completely inadequate or no website information.

- Pages on abandoned, hacked, or defaced websites.

- Pages or websites created with no expertise or pages that are highly untrustworthy, unreliable, unauthoritative, inaccurate, or misleading.

- Harmful or malicious pages or websites.

- Websites that have extremely negative or malicious reputations. Also use the Lowest rating for violations of the Google Webmaster Quality Guidelines. Finally, Lowest+ may be used both for pages with many low-quality characteristics and for pages whose lack of a single Page Quality characteristic makes you question the true purpose of the page. Important: Negative reputation is sufficient reason to give a page a Low quality rating. Evidence of truly malicious or fraudulent behavior warrants the Lowest rating.

- Deceptive pages or websites. Deceptive webpages appear to have a helpful purpose (the stated purpose), but are actually created for some other reason. Use the Lowest rating if a webpage page is deliberately created to deceive and potentially harm users in order to benefit the website.

- Some pages are designed to manipulate users into clicking on certain types of links through visual design elements, such as page layout, organization, link placement, font color, images, etc. We will consider these kinds of pages to have deceptive page design. Use the Lowest rating if the page is deliberately designed to manipulate users to click on Ads, monetized links, or suspect download links with little or no effort to provide helpful MC.

- Sometimes, pages just don’t “feel” trustworthy. Use the Lowest rating for any of the following: Pages or websites that you strongly suspect are scams

- Pages that ask for personal information without a legitimate reason (for example, pages which ask for name, birthdate, address, bank account, government ID number, etc.). Websites that “phish” for passwords to Facebook, Gmail, or other popular online services. Pages with suspicious download links, which may be malware.

- Use the Lowest rating for websites with extremely negative reputations.

Websites ‘Lacking Care and Maintenance’ Are Rated ‘Low Quality’.

Sometimes a website may seem a little neglected: links may be broken, images may not load, and content may feel stale or out-dated. If the website feels inadequately updated and inadequately maintained for its purpose, the Low rating is probably warranted.
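Broken links and missing images are the easiest of those neglect signals to check for yourself. Below is a minimal sketch, not a finished crawler: it pulls link and image URLs out of one page of HTML and reports the ones whose status looks broken. The names `LinkCollector`, `find_broken` and `http_status`, and the example.com URLs, are my own assumptions for illustration. The status lookup is passed in as a function so the logic can be tried without touching the network.

```python
import urllib.error
import urllib.request
from html.parser import HTMLParser
from typing import Callable, Dict, List
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects absolute URLs from <a href> and <img src> on one page."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.urls: List[str] = []

    def handle_starttag(self, tag, attrs):
        wanted = {"a": "href", "img": "src"}.get(tag)
        if wanted is None:
            return
        for name, value in attrs:
            if name == wanted and value:
                self.urls.append(urljoin(self.base_url, value))


def http_status(url: str) -> int:
    """HEAD request helper: HTTP status code, or 0 if the host is unreachable."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code
    except OSError:
        return 0


def find_broken(html: str, base_url: str, get_status: Callable[[str], int]) -> Dict[str, int]:
    """Map each broken URL on the page to the status that flagged it."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    broken: Dict[str, int] = {}
    for url in collector.urls:
        status = get_status(url)
        if status == 0 or status >= 400:
            broken[url] = status
    return broken


# Offline demonstration with made-up statuses instead of real requests.
sample_html = '<a href="/about">About</a> <a href="/old-page">Old</a> <img src="logo.png">'
fake_statuses = {
    "https://example.com/about": 200,
    "https://example.com/old-page": 404,
    "https://example.com/logo.png": 200,
}
broken = find_broken(sample_html, "https://example.com/", fake_statuses.get)
print(broken)  # {'https://example.com/old-page': 404}
```

To check a live page, pass `http_status` instead of `fake_statuses.get`. Some servers reject HEAD requests, so a real check may need to fall back to GET.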

“Broken” or Non-Functioning Pages Classed As Low Quality

I touched on 404 pages in my recent post about investigating why a site has lost traffic.

Google gives clear advice on creating useful 404 pages:

- Tell visitors clearly that the page they’re looking for can’t be found

- Use language that is friendly and inviting

- Make sure your 404 page uses the same look and feel (including navigation) as the rest of your site.

- Consider adding links to your most popular articles or posts, as well as a link to your site’s home page.

- Think about providing a way for users to report a broken link.

- Make sure that your webserver returns an actual 404 HTTP status code when a missing page is requested
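That last point is easy to check for yourself. Here is a minimal sketch, assuming only Python's standard library, that requests a URL which should not exist and flags a 'soft 404' (an error page served with a 200 status). The function names and the phrase list are hypothetical, chosen for illustration; they are not anything Google publishes.

```python
from typing import Tuple
from urllib.error import HTTPError
from urllib.request import urlopen

# Hypothetical phrases that suggest an error page; tune these for your own templates.
NOT_FOUND_PHRASES = ("page not found", "can't be found", "cannot be found", "404")


def classify_missing_page(status_code: int, body: str) -> str:
    """Classify the response for a URL that should NOT exist."""
    if status_code in (404, 410):
        return "ok"  # the server correctly reports the page as missing
    text = body.lower()
    if status_code == 200 and any(phrase in text for phrase in NOT_FOUND_PHRASES):
        return "soft-404"  # looks like an error page but claims success
    return "unexpected"  # e.g. real content or some other status for a bogus URL


def fetch_status(url: str) -> Tuple[int, str]:
    """Fetch a URL and return (status code, body), including 4xx/5xx responses."""
    try:
        with urlopen(url, timeout=10) as resp:
            return resp.status, resp.read().decode("utf-8", errors="replace")
    except HTTPError as err:
        # urllib raises on 4xx/5xx; the error object still carries code and body.
        return err.code, err.read().decode("utf-8", errors="replace")
```

To try it against a live site, call something like `classify_missing_page(*fetch_status("https://www.example.com/this-page-should-not-exist"))`, swapping in your own domain. Anything other than "ok" is worth a look.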

Ratings for Pages with Error Messages or No MC

Google doesn’t want to index pages without a specific purpose or sufficient main content. A good 404 page and proper setup prevent a lot of this from happening in the first place.

Some pages load with content created by the webmaster, but have an error message or are missing MC. Pages may lack MC for various reasons. Sometimes, the page is “broken” and the content does not load properly or at all. Sometimes, the content is no longer available and the page displays an error message with this information. Many websites have a few “broken” or non-functioning pages. This is normal, and those individual non-functioning or broken pages on an otherwise maintained site should be rated Low quality. This is true even if other pages on the website are overall High or Highest quality.

Does Google programmatically look at 404 pages?

We are told NO in a recent hangout – but the Quality Raters Guidelines say “Users probably care a lot”.

Do 404 Errors in Search Console Hurt My Rankings?

404 errors on invalid URLs do not harm your site’s indexing or ranking in any way. JOHN MUELLER

It appears this isn’t a one size fits all answer. If you properly deal with mishandled 404 errors that have some link equity, you reconnect equity that was once lost – and this ‘backlink reclamation’ evidently has value.

The issue here is that Google introduces a lot of noise into that Crawl Errors report to make it unwieldy and not very user-friendly.

A lot of broken links Google tells you about can often be totally irrelevant and legacy issues. Google could make it instantly more valuable by telling us which 404s are linked to from only external websites.

Fortunately, you can find your own broken links on site using the myriad of SEO tools available.

I also prefer to use Analytics to look for broken backlinks on a site with some history of migrations, for instance.
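The filtering I wish Google would do can be sketched in a few lines. This is a rough illustration, assuming you have already exported pairs of (missing URL, referring page) from whatever crawl or analytics tool you use. That input format, and the function names, are my assumptions and not the output of any particular tool.

```python
from collections import defaultdict
from urllib.parse import urlparse


def _bare_host(url_or_host):
    """Lower-cased hostname without a leading 'www.'."""
    host = urlparse(url_or_host).hostname or url_or_host
    host = host.lower()
    return host[4:] if host.startswith("www.") else host


def external_backlinks_to_404s(rows, own_host):
    """Group 404 URLs by the external pages that link to them.

    rows: iterable of (missing_url, referring_url) pairs (assumed format).
    own_host: your own hostname, e.g. "www.example.com".
    Note: other subdomains of your site are treated as external here,
    which is a simplification.
    """
    own = _bare_host(own_host)
    candidates = defaultdict(set)
    for missing_url, referrer in rows:
        if referrer and _bare_host(referrer) != own:
            candidates[missing_url].add(referrer)
    return {url: sorted(refs) for url, refs in candidates.items()}
```

The URLs that come back are the ones most likely to be worth a 301 redirect to a relevant live page, because a link from another site is still pointing at them.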

John has clarified some of this before, although he is talking specifically (I think) about errors found by Google in Search Console (formerly Google Webmaster Tools):

- In some cases, crawl errors may come from a legitimate structural issue within your website or CMS. How do you tell? Double-check the origin of the crawl error. If there’s a broken link on your site, in your page’s static HTML, then that’s always worth fixing

- What about the funky URLs that are “clearly broken?” When our algorithms like your site, they may try to find more great content on it, for example by trying to discover new URLs in JavaScript. If we try those “URLs” and find a 404, that’s great and expected. We just don’t want to miss anything important
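The first point, broken links in a page's static HTML, is something you can audit yourself. Below is a minimal sketch using Python's built-in `html.parser` to pull the links out of a page's source so each one can be checked. `LinkCollector` and `static_links` are names I made up for this example, and it only sees links present in the raw HTML, not ones added later by JavaScript.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collect href values from <a> tags in a page's static HTML."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        # Skip in-page anchors and non-HTTP schemes that cannot 404.
        if href and not href.startswith(("#", "mailto:", "javascript:")):
            # Resolve relative links against the page URL.
            self.links.append(urljoin(self.base_url, href))


def static_links(html, base_url):
    """Return absolute URLs for every usable link found in the HTML."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    return collector.links
```

Each URL returned can then be requested in turn, and any that come back as a 404 are the broken links that are 'always worth fixing'.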

If you are making websites and want them to rank, the 2015 and 2014 Quality Raters Guidelines document is a great guide for Webmasters to avoid low-quality ratings and potentially avoid punishment algorithms.

Google Is Not Going To Rank Low-Quality Pages When It Has Better Options

If you have exact match instances of key-phrases on low-quality pages, mostly these pages won’t have all the compound ingredients it takes to rank high in Google in 2017.

I was working on this long before I understood it well enough to write anything about it.

Here are a few examples of taking a standard page that did not rank for years and then turning it into a topic oriented resource page designed around a user’s intent:

Google, in many instances, would rather send long-tail search traffic, like users using mobile VOICE SEARCH, for instance, to high-quality pages ABOUT a concept/topic that explains relationships and connections between relevant sub-topics FIRST, rather than to only send that traffic to low-quality pages just because they have the exact phrase on the page.

Technical SEO

If you are doing a professional SEO audit for a real business, you are going to have to think like a Google Search Quality Rater AND a Google search engineer to provide real long term value to a client.

Google has a LONG list of technical requirements it advises you meet, on top of all the things it tells you NOT to do to optimise your website.

Meeting Google’s technical guidelines is no magic bullet to success – but failing to meet them can impact your rankings in the long run – and the odd technical issue can actually severely impact your entire site if rolled out across multiple pages.

The benefit of adhering to technical guidelines is often a second order benefit.

You don’t get penalised or filtered when others do. When others fall, you rise.

Mostly – individual technical issues will not be the reason you have ranking problems, but they still need to be addressed for any second order benefit they provide.

Conversely, sites that are not marked down are not demoted and so improve in rankings. Sites with higher rankings pick up more organic links, and this process can float a high-quality page quickly to the top of Google.

So – the sensible thing for any webmaster is to NOT give Google ANY reason to DEMOTE a site. Tick all the boxes Google tells you to tick.

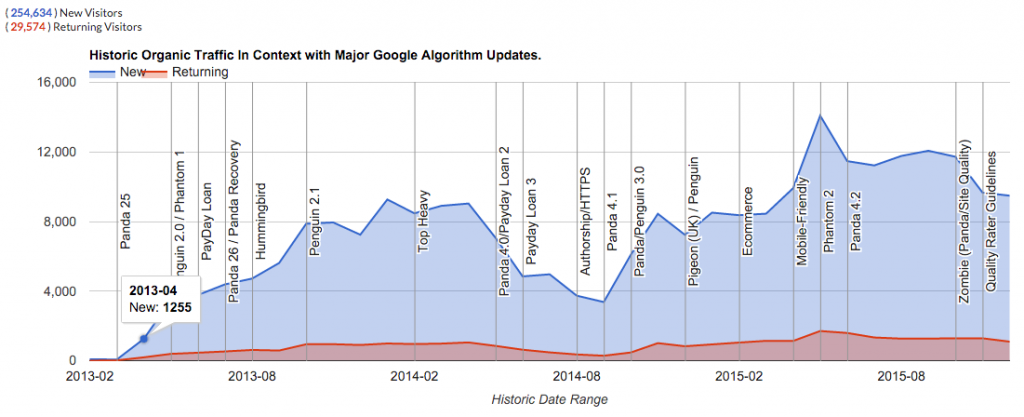

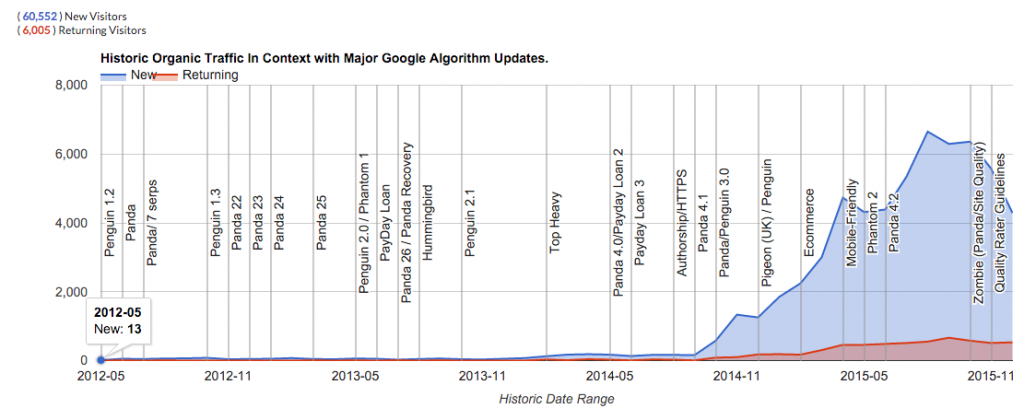

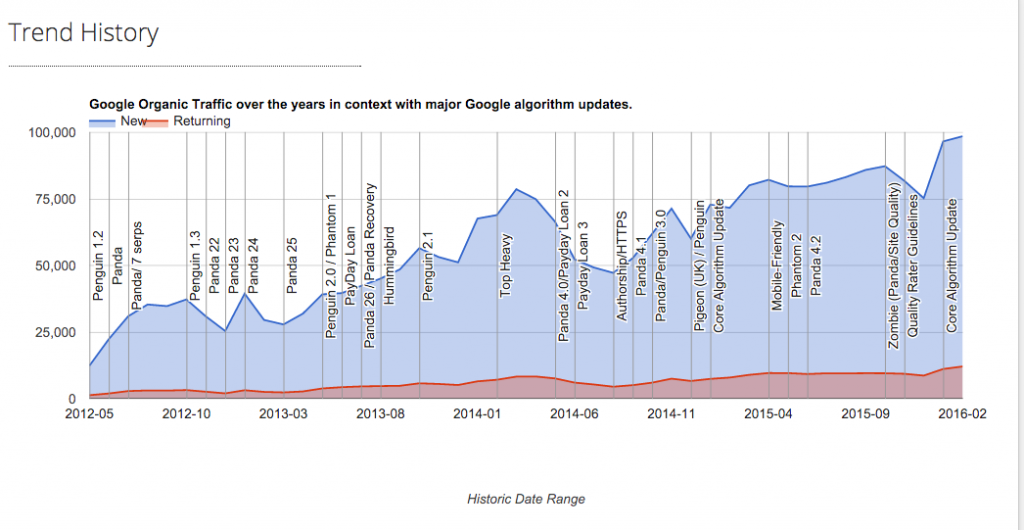

I have used this simple (but longer term) strategy to rank on page 1 or thereabouts for ‘SEO’ in the UK over the last few years, and drive 100 thousand relevant organic visitors to this site, every month, to only about 70 pages, without building any links over the last few years (and very much working on it part-time):

What Is Domain Authority?

Domain authority, or as Google calls it, ‘online business authority’, is an important ranking factor in Google. What is domain authority? Well, nobody knows exactly how Google calculates popularity, reputation, intent or trust, outside of Google, but when I write about domain authority I am generally thinking of sites that are popular, reputable and trusted – all of which can be faked, of course.

Most sites that have domain authority/online business authority have lots of links to them – that’s for sure – which is why link building has traditionally been so popular a tactic – and counting these links is generally how most 3rd party tools calculate a pseudo domain authority score, too.

Massive domain authority and ranking ‘trust’ was in the past awarded to very successful sites that have gained a lot of links from credible sources, and other online business authorities too.

Amazon has a lot of online business authority…. (Official Google Webmaster Blog)

SEOs more usually talk about domain trust and domain authority based on the number, type and quality of incoming links to a site.

Examples of trusted, authority domains include Wikipedia, the W3C and Apple. How do you become an OBA? Through building a killer online or offline brand or service with, usually, a lot of useful content on your site.

How do you take advantage of being an online business authority? Either you turn the site into an SEO Black Hole (only for the very biggest brands) or you pump out information – like all the time. On any subject. Because Google will rank it!

EXCEPT – If what you publish is deemed low quality and not suitable for your domain to have visibility on Google.

I think this ‘quality score’ Google has developed could be Google’s answer to this sort of historical domain authority abuse.

Can you (on a smaller scale in certain niches) mimic an online business authority by recognising what OBAs do for Google, and why Google ranks these high in search results? These provide THE service, THE content, THE experience. This takes a lot of work and a lot of time to create, or even mimic.

In fact, as an SEO, I honestly think the content route is the only sustainable way for most businesses to try to achieve OBA, at least in their niche or locale. I concede a little focused link building goes a long way to help, and you have certainly got to get out there and tell others about your site…

Have other relevant sites link to yours. Google Webmaster Guidelines

Brands are how you sort out the cesspool.

“Brands are the solution, not the problem,” Mr. Schmidt said. “Brands are how you sort out the cesspool.“

Google CEO Eric Schmidt said this. Reading between the lines, I’ve long thought this is good SEO advice.

If you are a ‘brand’ in your space or well-cited site, Google wants to rank your stuff at the top because it trusts you won’t spam it and fill results pages with crap and make Google look stupid.

That’s money just sitting on the table the way Google currently awards massive domain authority and trust to particular sites they rate highly.

Tip – Keep content within your topic, unless you are producing high-quality content, of course. (e.g. the algorithms detect no unnatural practices)

I am always thinking:

“how do I get links from big KNOWN sites to my site? Where is my next quality link coming from?”

Getting links from ‘Brands’ (or well-cited websites) in niches can mean ‘quality links’.

Easier said than done, for most, of course, but that is the point.

But the aim with your main site should always be to become an online brand.

Does Google Prefer Big Brands In Organic SERPs?

Well, yes. It’s hard to imagine that a system like Google’s was not designed exactly over the last few years to deliver the listings it does today – and it IS filled with a lot of pages that rank high LARGELY because of the domain the content is on.

Big Brands have an inherent advantage in Google’s ecosystem, and it’s kind of a suck for small businesses. There are more small businesses than big brands for Google to get Adwords bucks out of, too.

That being said – small businesses can still succeed if they focus on a strategy based on depth, rather than breadth, regarding how content is structured page to page on a website.

Is Domain Age An Important Google Ranking Factor?

No, not in isolation.

Having a ten-year-old domain that Google knows nothing about is the same as having a brand new domain.

A 10-year-old site that’s continually cited, year on year, by other, more authoritative and trusted sites? That’s valuable.

But that’s not the age of your website address ON ITS OWN in play as a ranking factor.

A one-year-old domain cited by authority sites is just as valuable if not more valuable than a ten-year-old domain with no links and no search-performance history.

Perhaps Domain age may come into play when other factors are considered – but I think Google works very much like this on all levels, with all ‘ranking factors’, and all ranking ‘conditions’.

I don’t think you can consider discovering ‘ranking factors’ without ‘ranking conditions’.

Other Ranking Factors:

- Domain age; (NOT ON ITS OWN)

- The length of site domain registration; (I don’t see much benefit ON ITS OWN, even knowing “Valuable (legitimate) domains are often paid for several years in advance, while doorway (illegitimate) domains rarely are used for more than a year.” – paying for a domain in advance just tells others you don’t want anyone else using this domain name; it is not much of an indication that you’re going to do something Google cares about)

- Domain registration information was hidden/anonymous; (possibly, under human review if OTHER CONDITIONS are met like looking like a spam site)

- Site top level domain (geographical focus, e.g. com versus co.uk); (YES)

- Site top level domain (e.g. .com versus .info); (DEPENDS)

- Sub domain or root domain? (DEPENDS)

- Domain past records (how often it changed IP); (DEPENDS)

- Domain past owners (how often the owner was changed) (DEPENDS)

- Keywords in the domain; (DEFINITELY – ESPECIALLY EXACT KEYWORD MATCH – although Google has a lot of filters that mute the performance of an exact match domain in 2017)

- Domain IP; (DEPENDS – for most, no)

- Domain IP neighbours; (DEPENDS – for most, no)

- Domain external mentions (non-linked) (I have no idea in 2017)

- Geo-targeting settings in Google Webmaster Tools (YES – of course)

Google Penalties For Unnatural Footprints

In 2017, you need to be aware that what works to improve your rank can also get you penalised (faster, and a lot more noticeably).

In particular, the Google web spam team is currently waging a PR war on sites that rely on unnatural links and other ‘manipulative’ tactics (and handing out severe penalties if it detects them). And that’s on top of many algorithms already designed to look for other manipulative tactics (like keyword stuffing or boilerplate spun text across pages).

Google is making sure it takes longer to see results from black and white hat SEO, and is intent on ensuring a flux in its SERPs based largely on where the searcher is in the world at the time of the search, and where the business is located relative to that searcher.

There are some things you cannot directly influence legitimately to improve your rankings, but there is plenty you CAN do to drive more Google traffic to a web page.

Ranking Factors

Google has HUNDREDS of ranking factors with signals that can change daily, weekly, monthly or yearly to help it work out where your page ranks in comparison to other competing pages in SERPs.

You will not ever find every ranking factor. Many ranking factors are on-page or on-site and others are off-page or off-site. Some ranking factors are based on where you are, or what you have searched for before.

I’ve been in online marketing for 15 years. In that time, a lot has changed. I’ve learned to focus on aspects that offer the greatest return on investment of your labour.

Learn SEO Basics….

Here are a few simple SEO tips to begin with:

- If you are just starting out, don’t think you can fool Google about everything all the time. Google has VERY probably seen your tactics before. So, it’s best to keep your plan simple. GET RELEVANT. GET REPUTABLE. Aim for a healthy, satisfying visitor experience. If you are just starting out – you may as well learn how to do it within Google’s Webmaster Guidelines first. Make a decision, early, if you are going to follow Google’s guidelines, or not, and stick to it. Don’t be caught in the middle with an important project. Do not always follow the herd.

- If your aim is to deceive visitors from Google, in any way, Google is not your friend. Google is hardly your friend at any rate – but you don’t want it as your enemy. Google will send you lots of free traffic though if you manage to get to the top of search results, so perhaps they are not all that bad.

- A lot of optimisation techniques that are effective in boosting a site’s rankings in Google are against Google’s guidelines. For example, many links that may have once promoted you to the top of Google, may, in fact, today be hurting your site and its ability to rank high in Google. Keyword stuffing might be holding your page back…. You must be smart, and cautious, when it comes to building links to your site in a manner that Google *hopefully* won’t have too much trouble with, in the FUTURE. Because they will punish you in the future.

- Don’t expect to rank number 1 in any competitive niche without a lot of investment and work. Don’t expect results overnight. Expecting too much too fast might get you in trouble with the spam team.

- You don’t pay anything to get into Google, Yahoo or Bing natural (or free) listings. It’s common for the major search engines to find your website pretty quickly by themselves within a few days. This is made so much easier if your CMS actually ‘pings’ search engines when you update content (via XML sitemaps or RSS for instance).

- To be listed and rank high in Google and other search engines, you really should consider and mostly abide by search engine rules and official guidelines for inclusion. With experience and a lot of observation, you can learn which rules can be bent, and which tactics are short term and perhaps, should be avoided.

- Google ranks websites (relevancy aside for a moment) by the number and quality of incoming links to a site from other websites (amongst hundreds of other metrics). Generally speaking, a link from a page to another page is viewed in Google’s “eyes” as a vote for the page the link points to. The more votes a page gets, the more trusted a page can become, and the higher Google will rank it – in theory. Rankings are HUGELY affected by how much Google ultimately trusts the DOMAIN the page is on. BACKLINKS (links from other websites) trump every other signal.

- I’ve always thought if you are serious about ranking – do so with ORIGINAL COPY. It’s clear – search engines reward good content they haven’t found before. Google indexes it blisteringly fast, for a start (within a second, if your website isn’t penalised!). So – make sure each of your pages has enough text content you have written specifically for that page – and you won’t need to jump through hoops to get it ranking.

- If you have original, quality content on a site, you also have a chance of generating inbound quality links (IBL). If your content is found on other websites, you will find it hard to get links, and it probably will not rank very well as Google favours diversity in its results. If you have original content of sufficient quality on your site, you can then let authority websites – those with online business authority – know about it, and they might link to you – this is called a quality backlink.

- Search engines need to understand that ‘a link is a link’ that can be trusted. Links can be designed to be ignored by search engines with the rel nofollow attribute.

- Search engines can also find your site by other websites linking to it. You can also submit your site to search engines directly, but I haven’t submitted any site to a search engine in the last ten years – you probably don’t need to do that. If you have a new site, I would immediately register it with Google Webmaster Tools these days.

- Google and Bing use a crawler (Googlebot and Bingbot) that spiders the web looking for new links to find. These bots might find a link to your homepage somewhere on the web and then crawl and index the pages of your site if all your pages are linked together. If your website has an XML sitemap, for instance, Google will use that to include that content in its index. An XML sitemap is INCLUSIVE, not EXCLUSIVE. Google will crawl and index every single page on your site – even pages outside an XML sitemap.

- Many think Google will not allow new websites to rank well for competitive terms until the web address “ages” and acquires “trust” in Google – I think this depends on the quality of the incoming links. Sometimes your site will rank high for a while then disappear for months. A “honeymoon period” to give you a taste of Google traffic, no doubt.

- Google WILL classify your site when it crawls and indexes your site – and this classification can have a DRASTIC effect on your rankings – it’s important for Google to work out WHAT YOUR ULTIMATE INTENT IS – do you want to be classified as an affiliate site made ‘just for Google’, a domain holding page or a small business website with a real purpose? Ensure you don’t confuse Google: be explicit with all the signals you can – to show on your website you are a real business, and your INTENT is genuine – and even more important today – FOCUSED ON SATISFYING A VISITOR.

- NOTE – If a page exists only to make money from Google’s free traffic – Google calls this spam. I go into this more, later in this guide.

- The transparency you provide on your website in text and links about who you are, what you do, and how you’re rated on the web or as a business is one way that Google could use (algorithmically and manually) to ‘rate’ your website. Note that Google has a HUGE army of quality raters and at some point, they will be on your site if you get a lot of traffic from Google.

- To rank for specific keyword phrase searches, you usually need to have the keyword phrase or highly relevant words on your page (not necessarily all together, but it helps) or in links pointing to your page/site.

- Ultimately what you need to do to compete is largely dependent on what the competition for the term you are targeting is doing. You’ll need to at least mirror how hard they are competing if a better opportunity is hard to spot.

- As a result of other quality sites linking to your site, the site now has a certain amount of real PageRank that is shared with all the internal pages that make up your website, and that will help provide a signal to where each page ranks in the future.

- Yes, you need to build links to your site to acquire more PageRank, or Google ‘juice’ – or what we now call domain authority or trust. Google is a link-based search engine – it does not quite understand ‘good’ or ‘quality’ content – but it does understand ‘popular’ content. It can also usually identify poor, or THIN CONTENT – and it penalises your site for that – or – at least – it takes away the traffic you once had with an algorithm change. Google doesn’t like calling actions they take a ‘penalty’ – it doesn’t look good. They blame your ranking drops on their engineers getting better at identifying quality content or links, or the inverse – low-quality content and unnatural links. If they do take action against your site for paid links – they call this a ‘Manual Action’ and you will get notified about it in Webmaster Tools if you sign up.

- Link building is not JUST a numbers game, though. One link from a “trusted authority” site in Google could be all you need to rank high in your niche. Of course, the more “trusted” links you attract, the more Google will trust your site. It is evident you need MULTIPLE trusted links from MULTIPLE trusted websites to get the most from Google in 2017.

- Try and get links within page text pointing to your site with relevant, or at least, natural looking, keywords in the text link – not, for instance, in blogrolls or site-wide links. Try to ensure the links are not obviously “machine generated” e.g. site-wide links on forums or directories. Get links from pages, that in turn, have a lot of links to them, and you will soon see benefits.

- Onsite, consider linking to your other pages by linking to pages within main content text. I usually only do this when it is relevant – often, I’ll link to relevant pages when the keyword is in the title elements of both pages. I don’t go in for auto-generating links at all. Google has penalised sites for using particular auto link plugins, for instance, so I avoid them.

- Linking to a page with actual key-phrases in the link helps a great deal in all search engines when you want to feature for specific key terms. For example; “SEO Scotland” as opposed to https://www.hobo-web.co.uk or “click here“. Saying that – in 2017, Google is punishing manipulative anchor text very aggressively, so be sensible – and stick to brand mentions and plain URL links that build authority with less risk. I rarely ever optimise for grammatically incorrect terms these days (especially with links).

- I think anchor text in internal navigation links is still valuable – but keep it natural. Google needs links to find and help categorise your pages. Don’t underestimate the value of a clever, keyword-rich internal link architecture, and be sure to understand, for instance, how many words Google counts in a link, but don’t overdo it. Too many links on a page could be seen as a poor user experience. Avoid lots of hidden links in your template navigation.

- Search engines like Google ‘spider’ or ‘crawl’ your entire site by following all the links on your site to new pages, much as a human would click on the links to your pages. Google will crawl and index your pages, and within a few days usually, begin to return your pages in SERPs.

- After a while, Google will know about your pages, and keep the ones it deems ‘useful’ – pages with original content, or pages with a lot of links to them. The rest will be de-indexed. Be careful – too many low-quality pages on your site will impact your overall site performance in Google. Google is on record talking about good and bad ratios of quality content to low-quality content.

- Ideally, you will have unique pages, with unique page titles and unique page meta descriptions. Google does not seem to use the meta description when ranking your page for specific keyword searches if it is not relevant, and unless you are careful you might end up just giving spammers free original text for their site and not yours once they scrape your descriptions and put the text in main content on their site. I don’t worry about meta keywords these days as Google and Bing say they either ignore them or use them as spam signals.

- Google will take some time to analyse your entire site, examining text content and links. This process is taking longer and longer these days but is ultimately determined by your domain reputation and real PageRank.

- If you have a lot of duplicate low-quality text already found by Googlebot on other websites it knows about; Google will ignore your page. If your site or page has spammy signals, Google will penalise it, sooner or later. If you have lots of these pages on your site – Google will ignore most of your website.

- You don’t need to keyword stuff your text to beat the competition.

- You optimise a page for more traffic by increasing the frequency of the desired key phrase, related key terms, co-occurring keywords and synonyms in links, page titles and text content. There is no ideal amount of text – no magic keyword density. Keyword stuffing is a tricky business, too, these days.

- I prefer to make sure I have as many UNIQUE relevant words on the page that make up as many relevant long tail queries as possible.

- If you link out to irrelevant sites, Google may ignore the page, too – but again, it depends on the site in question. Who you link to, or HOW you link to, REALLY DOES MATTER – I expect Google to use your linking practices as a potential means by which to classify your site. Affiliate sites, for example, don’t do well in Google these days without some good quality backlinks and higher quality pages.

- Many search engine marketers think who you link out to (and who links to you) helps determine a topical community of sites in any field or a hub of authority. Quite simply, you want to be in that hub, at the centre if possible (however unlikely), but at least in it. I like to think of this one as a good thing to remember in the future as search engines get even better at determining topical relevancy of pages, but I have never actually seen any granular ranking benefit (for the page in question) from linking out.

- I’ve got by, by thinking external links to other sites should probably be on single pages deeper in your site architecture, with the pages receiving all your Google Juice once it’s been “soaked up” by the higher pages in your site structure (the home page, your category pages). This tactic is old school but I still follow it. I don’t think you need to worry about that, too much, in 2017.

- Original content is king and will attract a “natural link growth” – in Google’s opinion. Too many incoming links too fast might devalue your site, but again, I usually err on the safe side – I always aimed for massive diversity in my links – to make them look ‘more natural’. Honestly, I go for natural links in 2017 full stop, for this website.

- Google can devalue whole sites, individual pages, template generated links and individual links if Google deems them “unnecessary” and a ‘poor user experience’.

- Google knows who links to you, the “quality” of those links, and whom you link to. These – and other factors – help ultimately determine where a page on your site ranks. To make it more confusing – the page that ranks on your site might not be the page you want to rank or even the page that determines your rankings for this term. Once Google has worked out your domain authority – sometimes it seems that the most relevant page on your site Google HAS NO ISSUE with will rank.

- Google decides which pages on your site are important or most relevant. You can help Google by linking to your important pages and ensuring at least one page is well optimised amongst the rest of your pages for your desired key phrase. Always remember Google does not want to rank ‘thin’ pages in results – any page you want to rank – should have all the things Google is looking for. That’s a lot these days!

- It is important you spread all that real ‘PageRank’ – or link equity – to your keyword/phrase rich sales pages, and that enough remains for the rest of the site pages, so Google does not ‘demote’ pages into oblivion – or ‘supplemental results’ as we old timers knew them back in the day. Again – this is slightly old school – but it gets me by, even today.

- Consider linking to important pages on your site from your home page, and other important pages on your site.

- Focus on RELEVANCE first. Then, focus your marketing efforts and get REPUTABLE. This is the key to ranking ‘legitimately’ in Google in 2017.

- Every few months Google changes its algorithm to punish sloppy optimisation or industrial manipulation. Google Panda and Google Penguin are two such updates, but the important thing is to understand Google changes its algorithms constantly to control its listings pages (over 600 changes a year we are told).

- The art of rank modification is to rank without tripping these algorithms or getting flagged by a human reviewer – and that is tricky!

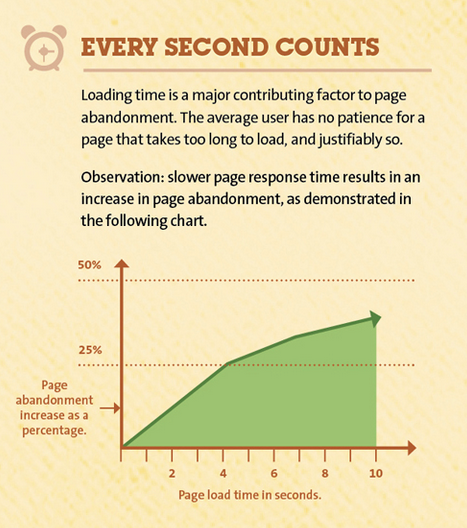

- Focus on improving website download speeds at all times. The web is changing very fast, and a fast website is a good user experience.
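Several of the tips above describe a link as a ‘vote’ and PageRank as something that flows between the pages of a site. To make that idea concrete, here is a minimal sketch of the classic, published PageRank calculation by power iteration. It is a textbook simplification, not Google's current ranking system, and the damping value of 0.85 is simply the commonly quoted default. The page names in the usage note are made up.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by power iteration.

    links: dict mapping each page to the list of pages it links to.
    Returns a dict of page -> score; scores sum to roughly 1.0.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page starts each round with a small baseline share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its 'vote' evenly across the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank evenly.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank
```

With a toy graph such as `{"home": ["sales", "blog"], "blog": ["sales"], "sales": ["home"], "orphan": []}`, the page that collects links from both the home page and the blog scores higher than the blog, and the page nobody links to scores lowest. That is the intuition behind pointing internal links at the pages you want to rank.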

Welcome to the tightrope that is modern web optimisation.

Read on if you would like to learn how to SEO….

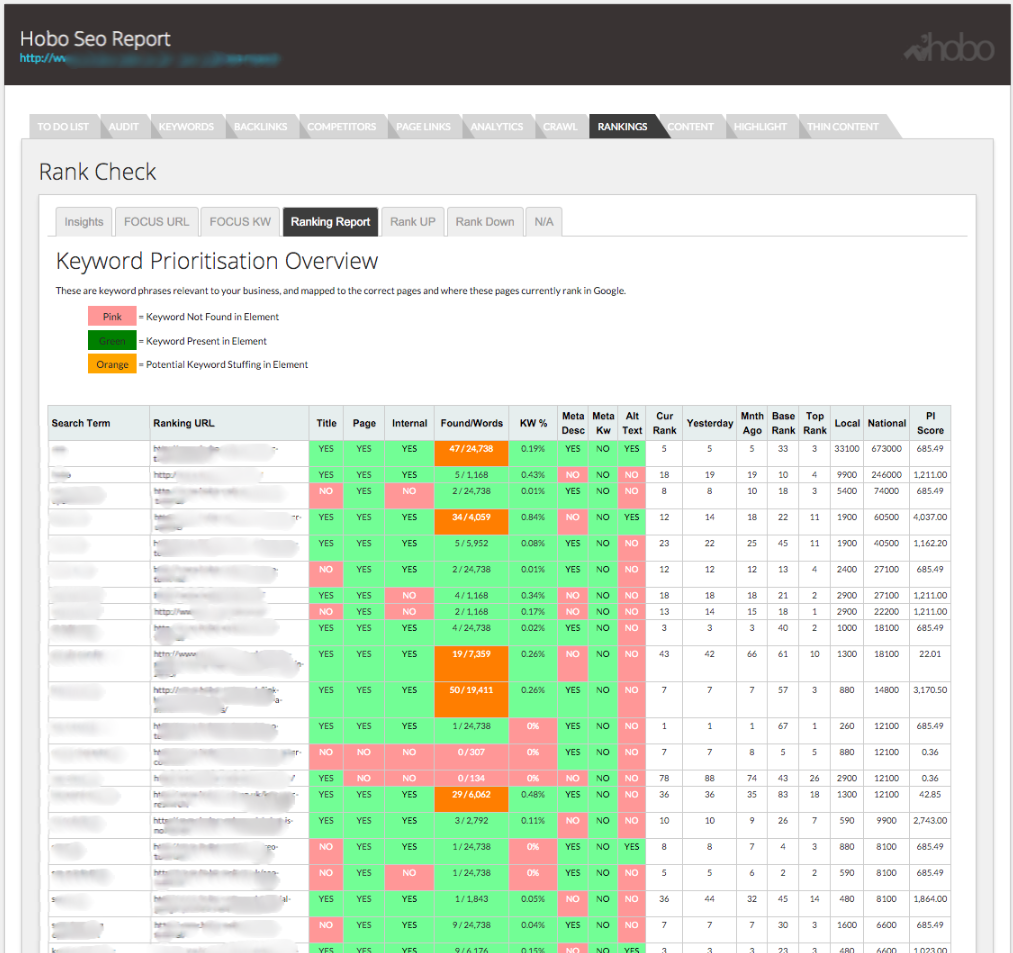

Keyword Research

The first step in any professional campaign is to do some keyword research and analysis.

Somebody asked me about a simple white hat tactic, and I think this is probably the simplest thing anyone can do that guarantees results.

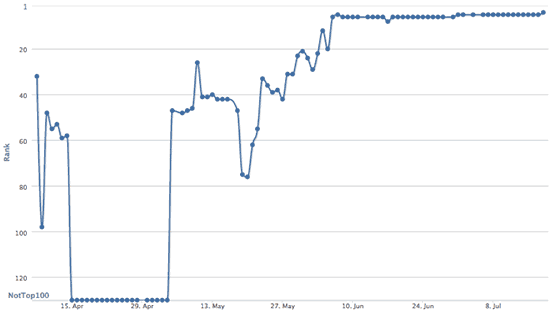

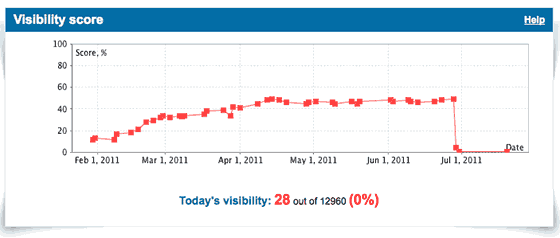

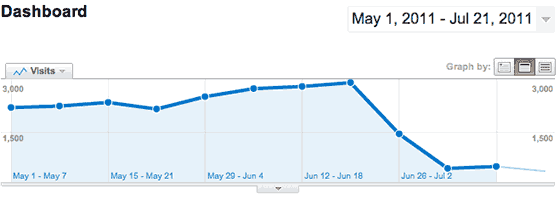

The chart above (from last year) illustrates a reasonably valuable 4-word term that one of my pages didn’t rank high in Google for, but that I thought it probably should and could rank for, with this simple technique.

I thought it a simple example to illustrate an aspect of onpage SEO or ‘rank modification’ that’s white hat, 100% Google friendly and never, ever going to cause you a problem with Google.

This ‘trick’ works with any keyword phrase, on any site, with obvious differing results based on the availability of competing pages in SERPs, and availability of content on your site.

The keyword phrase I am testing rankings for isn’t ON the page, and I did NOT add the key phrase to the page or to incoming links, or use any technical tricks like redirects or any hidden technique, but as you can see from the chart, rankings seem to be going in the right direction.

You can profit from it if you know a little about how Google works (or seems to work, in many observations, over years, excluding when Google throws you a bone on synonyms). You can’t ever be 100% certain you know how Google works on any level, unless it’s data showing you’re wrong, of course.

What did I do to rank number 1 from nowhere for that key phrase?

I added one keyword to the page in plain text because adding the actual ‘keyword phrase’ itself would have made my text read a bit keyword stuffed for other variations of the main term. It gets interesting if you do that to a lot of pages, and a lot of keyword phrases. The important thing is keyword research – and knowing which unique keywords to add.

This example illustrates that a key to ‘relevance’ on a page, in a lot of instances, is a keyword.

The precise keyword.
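As a rough illustration of that research step, here is a minimal Python sketch that lists which words of a target key phrase do not yet appear in a page’s text. The function name `missing_keywords`, the sample page text and the sample phrase are all made up for this example. It is a plain word comparison and knows nothing about synonyms or how Google actually scores relevance.

```python
import re


def missing_keywords(key_phrase: str, page_text: str) -> list[str]:
    """Return the words of key_phrase that do not appear in page_text."""
    # Lower-case both inputs and split them into simple word tokens.
    page_words = set(re.findall(r"[a-z0-9']+", page_text.lower()))
    phrase_words = re.findall(r"[a-z0-9']+", key_phrase.lower())
    return [word for word in phrase_words if word not in page_words]


# Hypothetical page copy and target phrase, for illustration only.
page = "Our beginners guide to search engine optimisation for small sites."
print(missing_keywords("seo tutorial for beginners", page))
# prints ['seo', 'tutorial']
```

A list like this only tells you which words are candidates. Which one to add, and whether it reads naturally in the copy, is still a judgment call.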

Yes – plenty of other things can be happening at the same time. It’s hard to identify EXACTLY why Google ranks pages all the time…but you can COUNT on other things happening and just get on with what you can see works for you.

In a time of light optimisation, it’s useful to EARN a few terms you SHOULD rank for in simple ways that leave others wondering how you got it.

Of course, you can still keyword stuff a page, or still spam your link profile – but it is ‘light’ optimisation I am genuinely interested in testing on this site – how to get more with less – I think that’s the key to not tripping Google’s aggressive algorithms.

There are many tools on the web to help with basic keyword research, including the Google Keyword Planner tool, and there are even more useful third-party SEO tools to help you do this.

You can use many keyword research tools to quickly identify opportunities to get more traffic to a page.

I built my own:

Google Analytics Keyword ‘Not Provided.’

Google Analytics was the very best place to look at keyword opportunity for some (especially older) sites, but that all changed a few years back.

Google stopped telling us which keywords are sending traffic to our sites from the search engine back in October 2011, citing privacy concerns for its users.

Google will now begin encrypting searches that people do by default, if they are logged into Google.com already through a secure connection. The change to SSL search also means that sites people visit after clicking on results at Google will no longer receive “referrer” data that reveals what those people searched for, except in the case of ads.

Google Analytics now displays keyword “not provided” instead.

In Google’s new system, referrer data will be blocked. This means site owners will begin to lose valuable data that they depend on, to understand how their sites are found through Google. They’ll still be able to tell that someone came from a Google search. They won’t, however, know what that search was. SearchEngineLand (A great source for Google industry news)

You can still get some of this data if you sign up for Google Webmaster Tools (and you can combine this in Google Analytics) but the data even there is limited and often not entirely accurate. The keyword data can be useful, though – and access to backlink data is essential these days.

If the website you are working on is an aged site – there’s probably a wealth of keyword data in Google Analytics:

Do Keywords In Bold Or Italic Help?

Some webmasters claim putting your keywords in bold or putting your keywords in italics is a beneficial ranking factor in terms of search engine optimizing a page.

It is essentially impossible to test this, and I think these days, Google could well be using this (and other easy-to-identify on-page optimisation efforts) to determine what to punish a site for, rather than what to promote in SERPs.

Any item you can ‘optimise’ on your page – Google can use this against you to filter you out of results.

I use bold or italics these days specifically for users.

I only use emphasis if it’s natural or this is really what I want to emphasise!

Do not tell Google what to filter you for that easily.

I think Google treats websites it trusts far differently from others in some respects.

That is, more trusted sites might get away with things that untrusted sites would not.

Keep it simple, natural, useful and random.

How Many Words & Keywords Do I Use On A Page?

I get asked this all the time –

how much text do you put on a page to rank for a certain keyword?

The answer is there is no optimal amount of text per page, but how much text you’ll ‘need’ will be based on your DOMAIN AUTHORITY, your TOPICAL RELEVANCE and how much COMPETITION there is for that term, and HOW COMPETITIVE that competition is.

Instead of thinking about the quantity of the text, you should think more about the quality of the content on the page. Optimise this with searcher intent in mind. Well, that’s how I do it.

I don’t find that you need a minimum amount of words or text to rank in Google. I have seen pages with 50 words outrank pages with 100, 250, 500 or 1000 words. Then again I have seen pages with no text rank on nothing but inbound links or other ‘strategy’. In 2017, Google is a lot better at hiding away those pages, though.

At the moment, I prefer long form pages with a lot of text although I still rely heavily on keyword analysis to make my pages. The benefits of longer pages are that they are great for long tail key phrases.

Creating deep, information-rich pages focuses the mind when it comes to producing authoritative, useful content.

Every site is different. Some pages, for example, can get away with 50 words because of a good link profile and the domain it is hosted on. For me, the important thing is to make a page relevant to a user’s search query.

I don’t care how many words I achieve this with and often I need to experiment on a site I am unfamiliar with. After a while, you get an idea how much text you need to use to get a page on a certain domain into Google.

One thing to note – the more text you add to the page, as long as it is unique, keyword rich and relevant, the more that page will be rewarded with more visitors from Google.

There is no optimal number of words on a page for placement in Google. Every website – every page – is different from what I can see. Don’t worry too much about word count if your content is original and informative. Google will probably reward you on some level – at some point – if there is lots of unique text on all your pages.

What is The Perfect Keyword Density?

The short answer to this is – there isn’t one.

There is no one-size-fits-all keyword density, no optimal percentage guaranteed to rank any page at number 1. However, I do know you can keyword stuff a page and trip a spam filter.

Most web optimisation professionals agree there is no ideal percent of keywords in a text to get a page to number 1 in Google. Search engines are not that easy to fool, although the key to success in many fields is doing simple things well (or, at least, better than the competition).

I write natural page copy where possible, always focused on the key terms. I have looked into this, and I never calculate density to identify the best % – there are way too many other things to work on. If it looks natural, it’s ok with me.

I aim to include related terms, long-tail variants and synonyms in Primary Content – at least ONCE, as that is all some pages need.

Optimal keyword density is a myth, although there are many who would argue otherwise.
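If you are curious what such a percentage would even look like, the sketch below computes one possible definition of keyword density: the share of a page’s words that belong to exact matches of a phrase. That definition, the function name `keyword_density` and the sample text are assumptions for illustration, and other tools count it differently. Treat the number as a sanity check for obvious stuffing, not as a target to aim for.

```python
import re


def keyword_density(text: str, phrase: str) -> float:
    """Percentage of words in text that belong to exact matches of phrase."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    phrase_words = re.findall(r"[a-z0-9']+", phrase.lower())
    if not words or not phrase_words:
        return 0.0
    size = len(phrase_words)
    # Slide a window over the text and count exact phrase matches.
    hits = sum(
        1
        for start in range(len(words) - size + 1)
        if words[start:start + size] == phrase_words
    )
    return 100.0 * hits * size / len(words)


# Hypothetical, deliberately stuffed copy: 8 words, 4 of them in the phrase.
sample = "Cheap flights to Rome and more cheap flights"
print(keyword_density(sample, "cheap flights"))
# prints 50.0
```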

‘Things, Not Strings’

Google is better at working out what a page is about, and what it should be about to satisfy the intent of a searcher, and it isn’t relying only on keyword phrases on a page to do that anymore.

Google has a Knowledge Graph populated with NAMED ENTITIES and in certain circumstances, Google relies on such information to create SERPs (Search Engine Results Pages).

Google has plenty of options when rewriting the query in a contextual way, based on what you searched for previously, who you are, how you searched and where you are at the time of the search.

Can I Just Write Naturally and Rank High in Google?

Yes, you must write naturally (and succinctly) in 2017, but if you have no idea which keywords you are targeting, and no expertise in the topic, you will be left behind those that can access this experience.

You can just ‘write naturally’ and still rank, albeit for fewer keywords than you would have if you optimised the page.

There are too many competing pages targeting the top spots not to optimise your content.

Naturally, how much text you need to write, how much you need to work into it, and where you ultimately rank, is going to depend on the domain reputation of the site you are publishing the article on.

Do You Need Lots of Text To Rank Pages In Google?

User search intent is a way marketers describe what a user wants to accomplish when they perform a Google search.

SEOs have understood user search intent to fall broadly into the following categories and there is an excellent post on Moz about this.

- Transactional – The user wants to do something, like buy, sign up or register, to complete a task they have in mind.

- Informational – The user wishes to learn something

- Navigational – The user knows where they are going

The Google human quality rater guidelines modify these to simpler constructs:

- Do

- Know

- Go

As long as you meet the user’s primary intent, you can do this with as few words as it takes to do so.

You do NOT need lots of text to rank in Google.

Optimise For User Intent & Satisfaction

When it comes to writing SEO-friendly text for Google, we must optimise for user intent, not simply what a user typed into Google.

Google will send people looking for information on a topic to the highest quality, relevant pages it has in its database, often BEFORE it relies on how Google ‘used‘ to work e.g. relying on finding near or exact match instances of a keyword phrase on any one page.

Google is constantly evolving to better understand the context and intent of user behaviour, and it doesn’t mind rewriting the query used to serve high-quality pages to users that comprehensively deliver on user satisfaction e.g. explore topics and concepts in a unique and satisfying way.

Of course, optimising for user intent, even in this fashion, is something a lot of marketers had been doing long before query rewriting and Google Hummingbird came along.

Optimising For ‘The Long Click’

When it comes to rating user satisfaction, there are a few theories doing the rounds at the moment that I think are sensible. Google could be tracking user satisfaction by proxy. When a user uses Google to search for something, user behaviour from that point on can be a proxy for the relevance and relative quality of the actual SERP.

What is a Long Click?

A user clicks a result and spends time on it, sometimes terminating the search.

What is a Short Click?

A user clicks a result and bounces back to the SERP, pogo-sticking between other results until a long click is observed. Google has this information if it wants to use it as a proxy for query satisfaction.

For more on this, I recommend this article on the time to long click.
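To make the long click and short click idea concrete, here is a small Python sketch that labels a click from two observations: how long the user stayed, and whether they came back to the SERP. Everything in it is an assumption for illustration, including the `Click` record, the `classify` function and especially the 60-second threshold. Nobody outside Google knows whether or how such a signal is measured.

```python
from dataclasses import dataclass

# Hypothetical cut-off; no real threshold is publicly known.
LONG_CLICK_SECONDS = 60


@dataclass
class Click:
    url: str
    dwell_seconds: float
    returned_to_serp: bool


def classify(click: Click) -> str:
    """Label a click 'long' or 'short' using the definitions in the text."""
    # The user never came back: the search ended on this result.
    if not click.returned_to_serp:
        return "long"
    # The user came back, but only after spending real time on the page.
    if click.dwell_seconds >= LONG_CLICK_SECONDS:
        return "long"
    # A quick bounce back to the results, i.e. pogo-sticking.
    return "short"


print(classify(Click("https://www.example.com/a", 5, True)))
# prints short
```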

Optimise Supplementary Content on the Page

Once you have the content, you need to think about supplementary content and secondary links that help users on their journey of discovery.

That content CAN be on links to your own content on other pages, but if you are really helping a user understand a topic – you should be LINKING OUT to other helpful resources e.g. other websites.

A website that does not link out to ANY other website could accurately be interpreted as, at the least, self-serving. I can’t think of a website that is the true end-point of the web.

- TASK – On informational pages, LINK OUT to related pages on other sites AND on other pages on your own website where RELEVANT

- TASK – For e-commerce pages, ADD RELATED PRODUCTS.

- TASK – Create In-depth Content Pieces

- TASK – Keep Content Up to Date, Minimise Ads, Maximise Conversion, Monitor for broken or redirected links

- TASK – Assign in-depth content to an author with some online authority, or someone with displayable expertise on the subject

- TASK – If running a blog, first, clean it up. To avoid creating pages that might be considered thin content in 6 months, consider planning a wider content strategy. If you publish 30 ‘thinner’ pages about various aspects of a topic, you can then fold all this together into a single topic-centred page that helps a user to understand something related to what you sell.
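One way to check the first task on a page is to count how many links point to your own site and how many point elsewhere. The sketch below does that with Python’s standard `html.parser` and `urllib.parse` modules. The `LinkCollector` class, the sample markup and the example.com and example.org addresses are all hypothetical, and the sketch does not test whether the links are broken or redirected.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect a page's links, split into internal and external."""

    def __init__(self, page_url: str):
        super().__init__()
        self.page_url = page_url
        self.internal: list[str] = []
        self.external: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href:
            return
        # Resolve relative links against the page's own address.
        absolute = urljoin(self.page_url, href)
        parsed = urlparse(absolute)
        if parsed.scheme not in ("http", "https"):
            return  # skip mailto:, tel: and similar
        same_host = parsed.netloc == urlparse(self.page_url).netloc
        (self.internal if same_host else self.external).append(absolute)


# Hypothetical markup, for illustration only.
html = """
<p>See our <a href="/guide/">guide</a> and
<a href="https://www.example.org/reference">this reference</a>.
<a href="mailto:me@example.com">Email</a></p>
"""
collector = LinkCollector("https://www.example.com/article/")
collector.feed(html)
print(len(collector.internal), len(collector.external))
# prints 1 1
```

An informational page that reports zero external links is a candidate for the ‘link out where relevant’ task above.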

Page Title Element

<title>What Is The Best Title Tag For Google?</title>

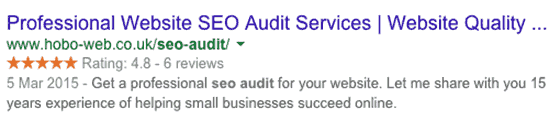

The page title tag (or HTML Title Element) is arguably the most important on page ranking factor (with regards to web page optimisation). Keywords in page titles can undeniably HELP your pages rank higher in Google results pages (SERPs). The page title is also often used by Google as the title of a search snippet link in search engine results pages.
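As a quick check on your own pages, the following Python sketch pulls the text out of the title element and tests whether a key phrase appears in it. It uses the standard `html.parser` module. The `TitleParser` class, the `title_contains` helper and the sample markup are made up for this example, and the check is a simple case-insensitive match, not a measure of how Google weighs a title.

```python
from html.parser import HTMLParser


class TitleParser(HTMLParser):
    """Collect the text inside the page's <title> element."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def title_contains(html: str, phrase: str) -> bool:
    """True if phrase appears (case-insensitively) in the page title."""
    parser = TitleParser()
    parser.feed(html)
    return phrase.lower() in parser.title.lower()


# Hypothetical page, reusing the example title from this section.
page = "<html><head><title>What Is The Best Title Tag For Google?</title></head></html>"
print(title_contains(page, "title tag"))
# prints True
```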

For me, a perfect title tag in Google is dependent on a number of factors, and I will lay down a couple below, but I have since expanded page title advice on another page (link below):

- A page title that is highly relevant to the page it refers to will maximise its usability, search engine ranking performance and click through satisfaction rate. It will probably be displayed in a web browser’s window title bar, and in clickable search snippet links used by Google, Bing & other search engines. The title element is the “crown” of a web page, with an important keyword phrase featuring AT LEAST ONCE within it.